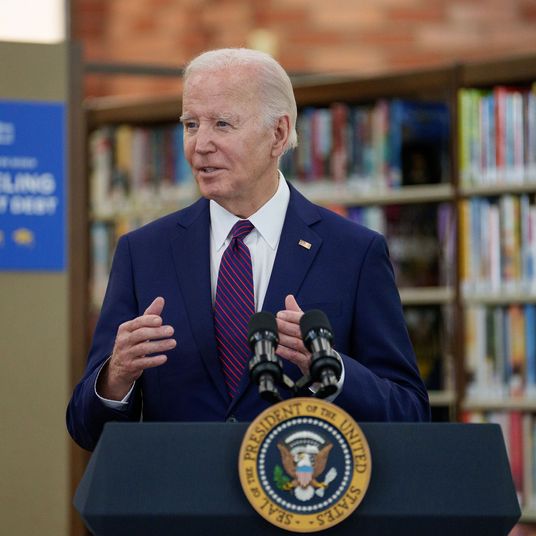

Earlier this week at South by Southwest, YouTube CEO Susan Wojcicki announced that the video site will use excerpts from Wikipedia to counteract videos promoting conspiracy theories. This new feature is, at first glance (and really, on second and third glances, too), odd. A megacorporation with billions of dollars and thousands of brilliant employees is … relying on a volunteer-run platform anyone can edit to fact-check information?

But the fact that YouTube sees Wikipedia as a reliable source is also, in a sense, a total validation of Wikipedia’s mission. An encyclopedia, open to edits from anyone, could easily have been misused and abused. Instead, it’s become the default place to find facts online. Even Google knows this: When you search for certain things, Google will pull information “snippets” about those terms from Wikipedia. And Wikipedia makes its content free for people to license and reproduce wherever they see fit — including the world’s most powerful search engine. (In a statement, Wikipedia said that it had no problem with YouTube’s decision to use the encyclopedia content — though the video site should definitely have given the encyclopedia some sort of advance notice.)

It’s worth examining, then, how Wikipedia has managed to maintain its role as a reliable database without thousands of paid moderators and editors, while major, well-funded platforms like Facebook and YouTube have become the subjects of frenzied debate about misinformation.

To start with, Wikipedia’s process is collaborative — it requires broad groups of people to come to an agreement about what can be said and what can’t. On YouTube, I might make one video about the Stoneman shooting, and you might make another with a totally opposite idea of truth; they’d then duke it out in “the marketplace of ideas” (the YouTube search results). On Wikipedia, there’s only one article about the Stoneman shooting, and it’s created by a group of people discussing and debating the best way to present information in a singular way, suggesting and sometimes voting on changes to a point where enough people are satisfied.

Importantly, that discussion is both entirely transparent and, at the same time, “behind the scenes.” The “Talk” pages on which editorial decisions are made are prominently linked to on every entry. Anyone can read, access, and participate — but not many people do. This means that the story of how an article came to be is made clear to a reader (unlike, say, algorithmic decisions made by Facebook), and also that there is less incentive for a given editor to call attention to themselves in the hopes of becoming a celebrity (unlike, say, the YouTube-star economy).

Wikipedia articles also have stringent requirements for what information can be included. The three main tenets are that information on the site must (1) be presented from a neutral point of view, (2) be verified by an outside source, and (3) not be based on original research. Each of these can be quibbled with (what does “neutral” mean?), and plenty of questionable statements slip through — but, luckily, you probably know that they’re questionable because of the infamous “[citation needed]” superscript that peppers the website.

Actual misinformation, meanwhile, is dealt with directly. Consider how the editors treat conspiracy theories. “Fringe theories may be mentioned, but only with the weight accorded to them in the reliable sources being cited,” Wikimedia tweeted in an explanatory thread earlier this week. In contrast, platform companies have spent much of the last year talking about maintaining their role as a platform for “all viewpoints,” and through design and presentation, they flatten everything users post to carry the same weight. A documentary on YouTube is presented in the exact same manner as an Infowars video, and until now, YouTube has felt no responsibility to draw distinctions.

But really, I’d argue that Wikipedia’s biggest asset is its willingness as a community and website to “delete.” It’s that simple. If there’s bad information, or info that’s just useless, Wikipedia’s regulatory system has the ability to discard it.

Deleting data is antithetical to data-reliant companies like Facebook and Google (which owns YouTube). This is because they are heavily invested in machine learning, which requires almost incomprehensibly large data sets on which to train programs so that they can eventually operate autonomously. The more pictures of cats there are online, the easier it is to train a computer to recognize a cat. For Facebook and Google, the idea of deleting data is sacrilege. Their solution to fake news and misinformation has been to throw more data at the problem: third-party fact-checkers and “disputed” flags giving equal weight to every side of a debate that really only has one.

To hear Facebook and Google tell it, “fake news” accounts for less than one percent of everything uploaded to their platforms. In that sense, the risk of actually deleting unproven conspiracy theories is minimal, and it actually improves the integrity of the data set these companies use to build machine-learning systems (remember when Microsoft’s Twitter bot turned racist in less than a day because users kept sending it racist tweets?). Instead of adding “Well, Wikipedia says …” next to bad videos, it might be time for major platforms to get over their fear of the delete key.

But on the business side, all of this data helps these companies sell targeted ads. Which is one of the final points in Wikipedia’s favor. The open encyclopedia, as a donation- and grant-funded nonprofit, has no incentive to generate revenue. It’s therefore not caught up in the advertising business, which means it has no need to ensure that users spend a lot of time on the site, which means it’s never fallen prey to the defining techniques of the social web — extremism, sensationalization, clickbait, misleading titles and thumbnails, and so on.

None of which is to say that Wikipedia is, by any means, perfect. In fact, just as the design of its institution and platform means that it is a more informative and accurate site than YouTube, it can also encourage bad and unfortunate behavior. As an online space dedicated to facts above all else, and where editorial decisions are made through conversation and voting, it can be a place where only the loudest and most persistent and self-confident users feel comfortable. Wikipedia, like every organization, struggles with bias: Its editors are often male and white.

And its openness can be taken advantage of — in the wake of breaking news, Wikipedia articles are often defaced (usually for comedic effect) or the setting for editorial arguments, placing it in a publishing role it wasn’t initially designed for. Notorious pages like “Race and IQ” have been the setting of some grossly bigoted, not to mention absolutely incorrect, edit wars.

But Wikipedia is still evolving, and as the site has evolved and grown, it’s gotten better. The phenomenon of ideological extremists holding sway over individual and highly contentious articles has largely ended, in part because Wikipedia is designed to make such domination very difficult. And anyway, if you want to learn more — or complain — here’s a Wikipedia entry called “Criticism of Wikipedia.”