On Sunday, The Wall Street Journal reported that Facebook is considering using internal tools that would be put into place if postelection violence occurs in the United States. The measures reportedly include “slowing the spread of viral content and lowering the bar for suppressing potentially inflammatory posts,” two options that Pivot hosts Kara Swisher and Scott Galloway consider vital to the regular operation of a private platform on which hundreds of millions of users get their news. On the latest episode of the podcast, the pair examines Facebook’s reported plan for the election, why it shouldn’t be considered censorship, and how the company’s strategy fits into the context of potential antitrust enforcement against big tech.

Kara Swisher: Facebook is prepping for postelection unrest using the same tools it uses in what it calls “at-risk” countries. We’re now an at-risk country, joining other places like Sri Lanka and Myanmar where these tools have been used before.

I’m glad it’s doing this, but boy, it caused it. It’s trying to put out a fire it caused. What do you think about this?

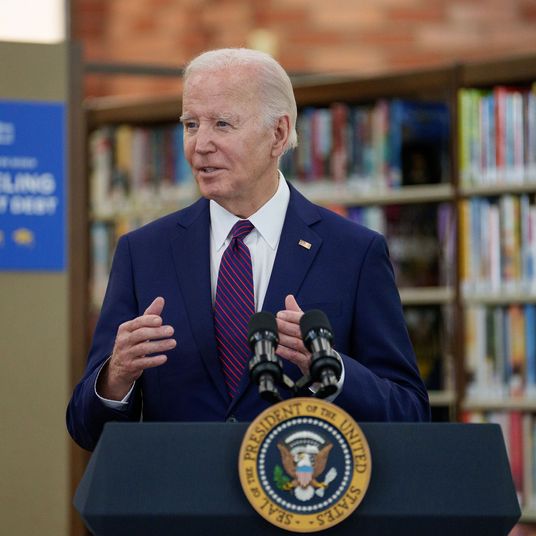

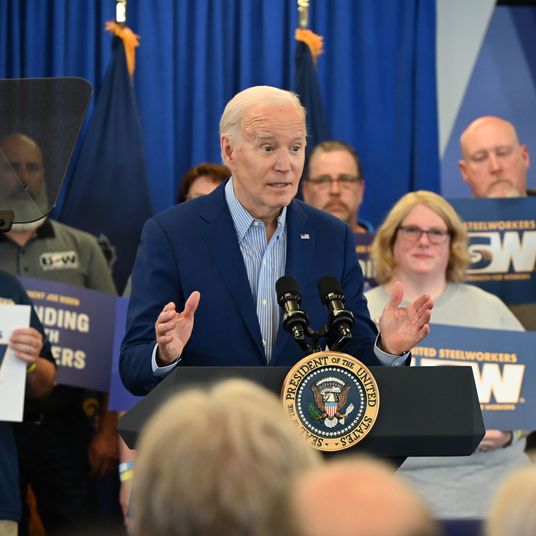

Scott Galloway: Well, I think it’s 11 a.m. on the Sunday after the rager you had as a senior in high school, and your parents sent you a text saying they decided to come home early, and you are scrambling to clean up the mess. Because the parents — in this metaphor, they’re named Biden and Harris — are up ten points and are on their way. So that kind of postelection thinking gets factored into Facebook’s decision-making.

Meanwhile, the New York University Ad Observatory has been collecting publicly available data on the platform showing what political ads are running. And Facebook is trying to say that the project — showing people what sort of ads are running in places like Pennsylvania and who is paying for them — violates its terms of service. What this says is that Facebook recognizes that NYU is probably going to find out, “Yeah, people were leveraging our data and our tools to suppress the vote,” or, “People were leveraging our tools and our technology to spread misinformation. And we’d rather that be as opaque as possible and to roll out as slowly as possible.”

Not only that, if we find out — and I believe we’re going to — that it fucked with what is the most important or seminal moment in a free society for the second time in a row, and maybe [Facebook] didn’t promote it, maybe it wasn’t its intention, maybe it would have rather it didn’t happen.

Swisher: Pitchfork time.

Galloway: If [Facebook] traded off security for letting people do it, or it traded off revenues for allowing it to happen, there’s going to be hell to pay. When dad and mom get home, it is going to be ugly.

Swisher: Except if it leads to a Trump win. Because Trump will do nothing.

Galloway: I genuinely believe that a lot of senior executives are not only worrying about their share price, but I think are starting to probably believe, Wow, I personally could probably get in a lot of hot water here. It’s possible they are really hoping for a Trump reelection. I think Jack Dorsey and Mark Zuckerberg have more riding on Trump’s reelection than almost anyone, other than maybe Putin and Borat and Rachel Maddow.

Swisher: Why wait until after the election to create these measures?

Galloway: Well, that’s exactly the right question, Kara. Why aren’t these measures a part of its policy? Why are these even measures? Why isn’t this just policy? It’s talking about reducing the virality of inflammatory content. Well, that sounds like a reasonable policy for any media company, period, not just around an election. Why is it something we’re only contemplating postelection?

Swisher: Should there be a mute button for someone, like if Trump starts to put out stuff that’s just inaccurate?

Galloway: So this gets into the whole notion of censorship. I think it’s a media company. I think media companies have biases. I think media companies are allowed to edit people. Based on their terms of service, if they had any reverence or allegiance or fidelity to their terms of service, both platforms would have shut his account off or down or kicked it off. And they didn’t, because he alone is probably generating several billion ads served. The conversation he inspires, the rage, the dialogue, the clicks, the Chobani and Nissan ads are just worth a ton of money to them.

Swisher: I think [Facebook is] culpable here if it doesn’t do the right thing. And I don’t know what I would do. I think it’s backed itself into a corner where it has to censor now, or if it doesn’t, there is damage. It has not gotten people used to the idea of who it is, that it is a private company that can do these things. So everybody now thinks Facebook is the public square. And it’s allowed that to happen; it’s put no strictures in place; it hasn’t made clear that this is its platform and this is how people are going to behave on it, and now it’s paying the price. If this is a close election and Trump starts to mess with it, I wouldn’t want to be the person to make that decision, but it’s going to have to. I don’t know what I would do.

Galloway: But you used a key word there. You used the word censoring, and I would argue that —

Swisher: I don’t think it’s censoring. It’s stopping someone from lying in a very problematic way.

Galloway: When Lesley Stahl says, “This is 60 Minutes, and we can’t put on things we can’t verify,” that is what Facebook needs to be doing. It’s not censoring. It’s taking responsibility for being a media company where two-thirds of America get their information.

Pivot is produced by Rebecca Sananes. Erica Anderson is the executive producer.

This transcript has been edited for length and clarity.